Two ways to run research with Synthetic Users and why the difference matters

IRIS, what difference does it make to use agents to accelerate research?

We recently ran the same research topic through two Synthetic Users workflows:

1. A regular research study

2. An identical research study with IRIS enabled

We applied both workflows to an exploration of the experience of medical workers on the night shift (a very tricky cohort to recruit with traditional research methods), looking at how nights unfold, how energy and mood change, and how workers use short breaks or downtime. The audience and goal were identical in both cases. What changed was how the research was guided.

Both used AI participants. Both produced strong insights. But the outputs felt very different.

The difference wasn’t automation. It was guidance.

Some of this difference showed up even in how the research was set up, including how synthetic users were configured and how much direction was applied during the process.

The same platform, two approaches: seeing what emerges first versus guiding the work earlier.

To IRIS, or not to. A question of guidance

1. IRIS OFF

In this mode, the system explores more freely and you get: broad coverage, unexpected patterns, edge cases and nuance, and a rich view of the landscape.

Ideal when you’re asking: “What’s going on here?”

The tradeoff: the output can be dense and may require more synthesis.

2. IRIS ON

In this mode, you actively steer the process and get: a clearer structure, stronger narrative, faster convergence, and insights that are easier to act on.

Works best when you’re asking: “What do we need to understand or decide?”

The tradeoff: you gain focus but give up some of the breadth that freer exploration surfaces.

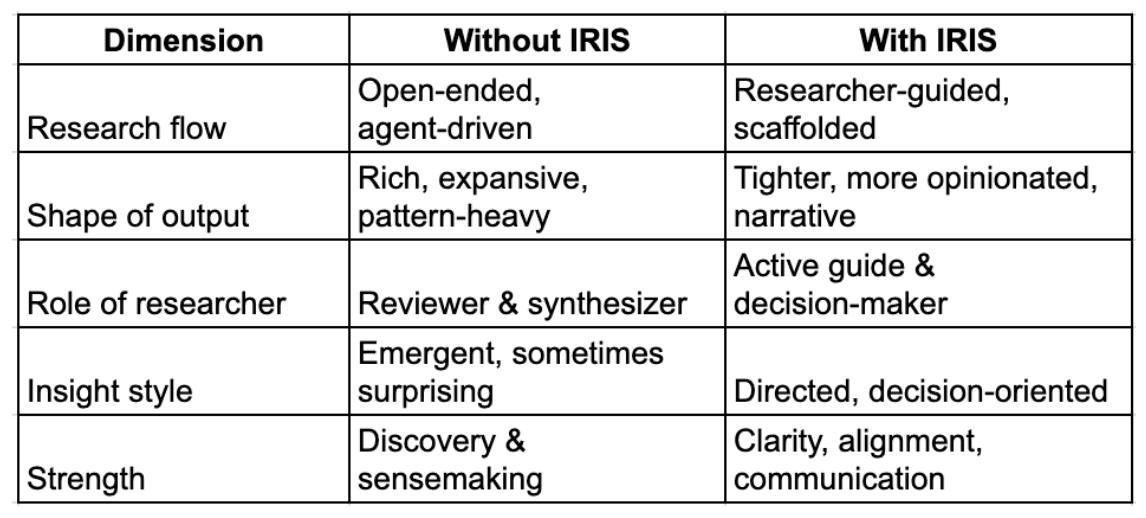

Here’s a side-by-side comparison:

Same system, different outcomes

What surprised us most was that both approaches surfaced many of the same core insights. What changed was how those insights were shaped.

- The exploratory workflow preserves ambiguity; IRIS resolves it.

- One prioritises discovery while the other prioritises clarity.

Why this matters

As AI becomes native to research, the real question is not:

“Should research be automated?”

But:

“How much direction should we apply and when?”

Working with a guided research partner

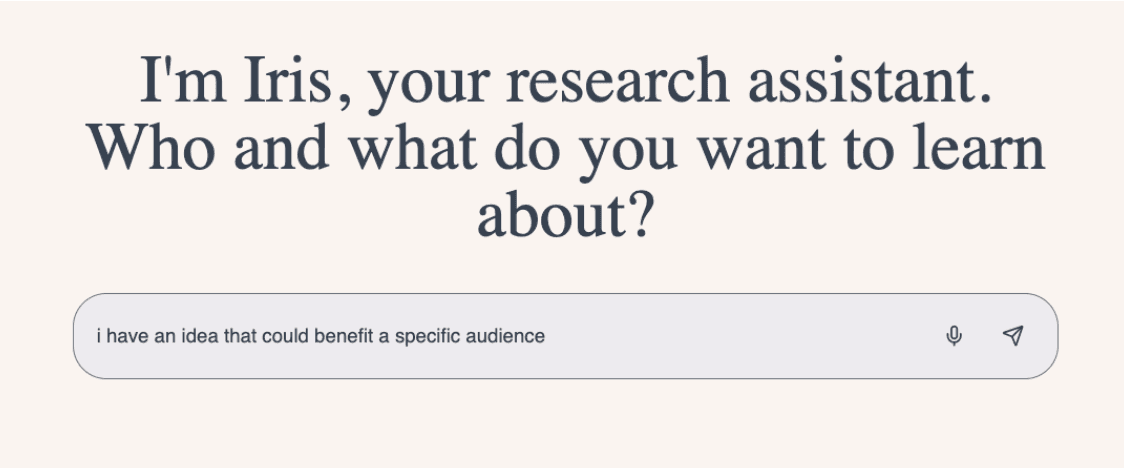

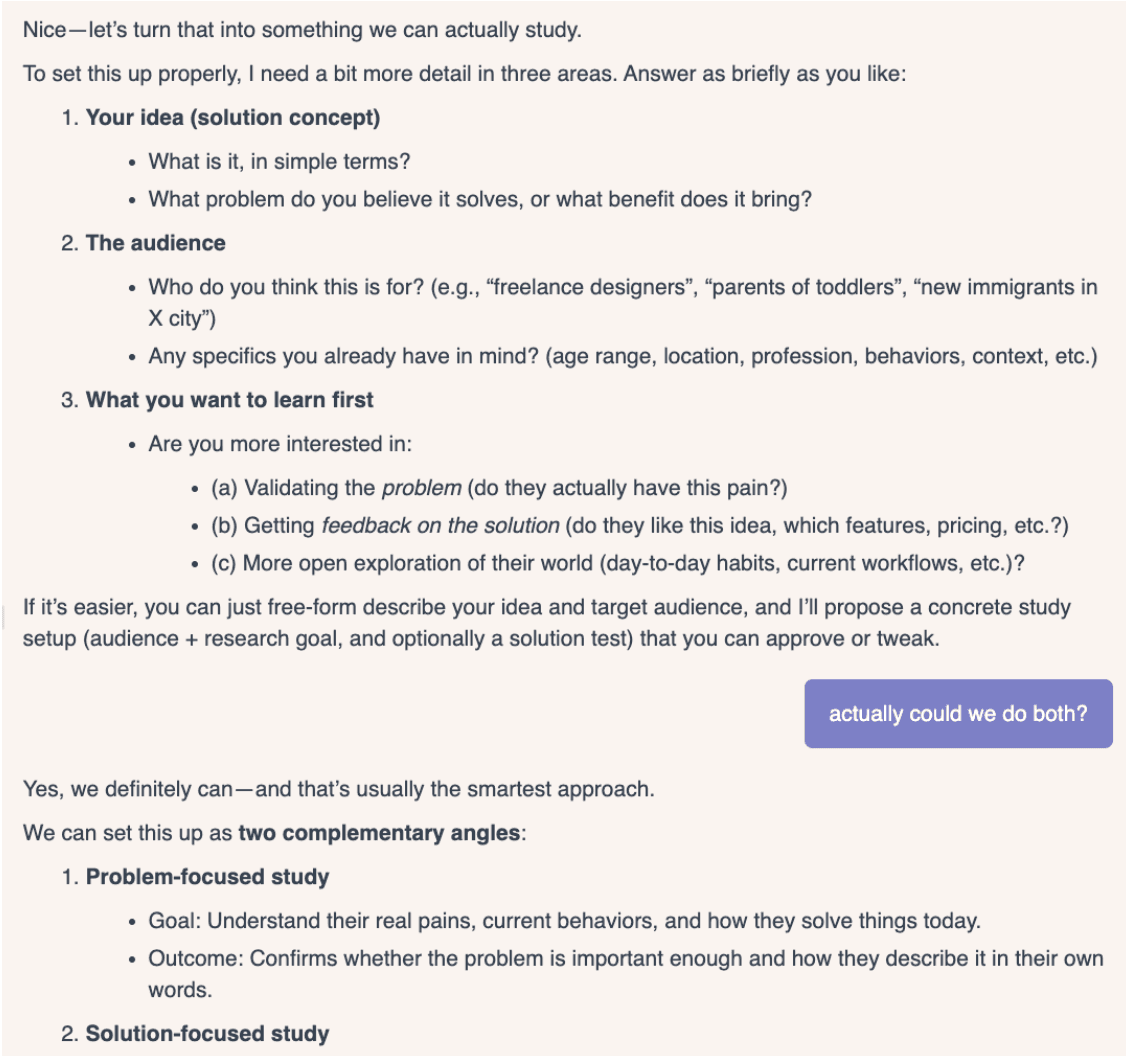

One thing that stood out in the workflow guided by IRIS was how different the starting point felt. Instead of needing a fully formed plan, we were able to begin with a rough idea and shape it through an ongoing conversation with IRIS.

That conversation wasn’t just about the research topic itself. We could ask questions about how to approach the work, get suggestions on where to dig deeper, and understand what was happening behind the scenes as the research evolved. IRIS acted less like a tool executing instructions and more like an experienced research partner helping pressure-test assumptions, suggest angles we hadn’t considered, and flag tradeoffs along the way.

As the work progressed, that guidance became more intentional. IRIS asked how we wanted the final report to be framed, what sort of stakeholders it was for, and whether there were specific areas of insight we wanted to emphasise. That made it easier to move from exploration to something more deliberate, without losing the richness of the underlying research.

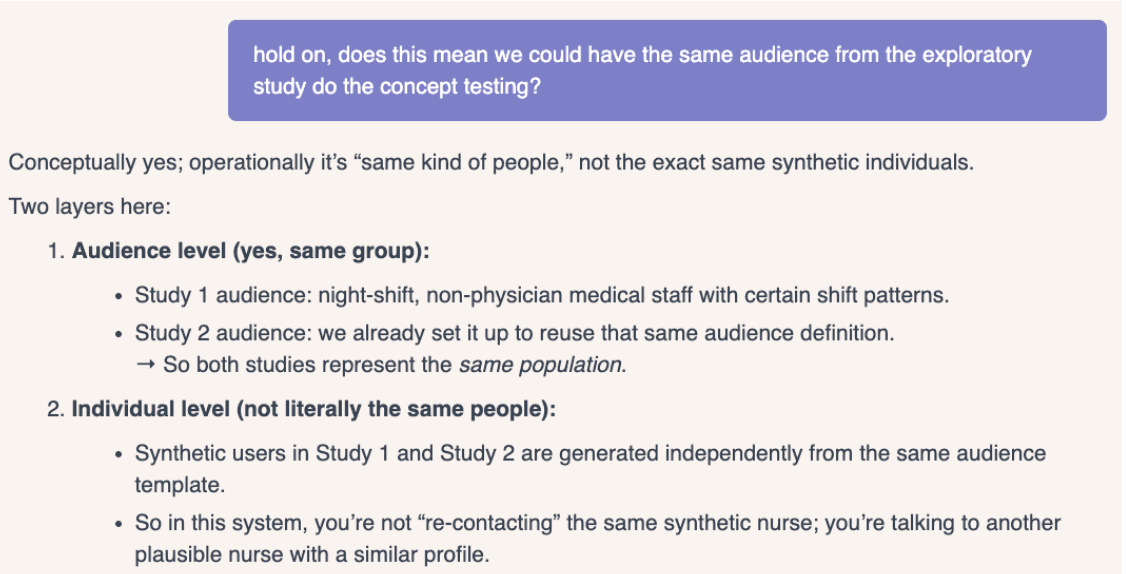

What we didn’t anticipate was what this made possible next. Another benefit of the guided workflow was how easy it was to extend the research. We ran a follow-up concept test using the same audience definition and asked IRIS to produce a combined report across both phases – something that would have taken many more steps without IRIS in the loop.

Along the way, it explained what was shared between the studies and what was newly generated, making the continuity and its limits clear.

Our takeaway

The future of research isn’t just agentic. It’s steerable.

With Synthetic Users, teams can choose when to let agents explore and when to guide them toward what matters most.