
Introducing Shuffle v2

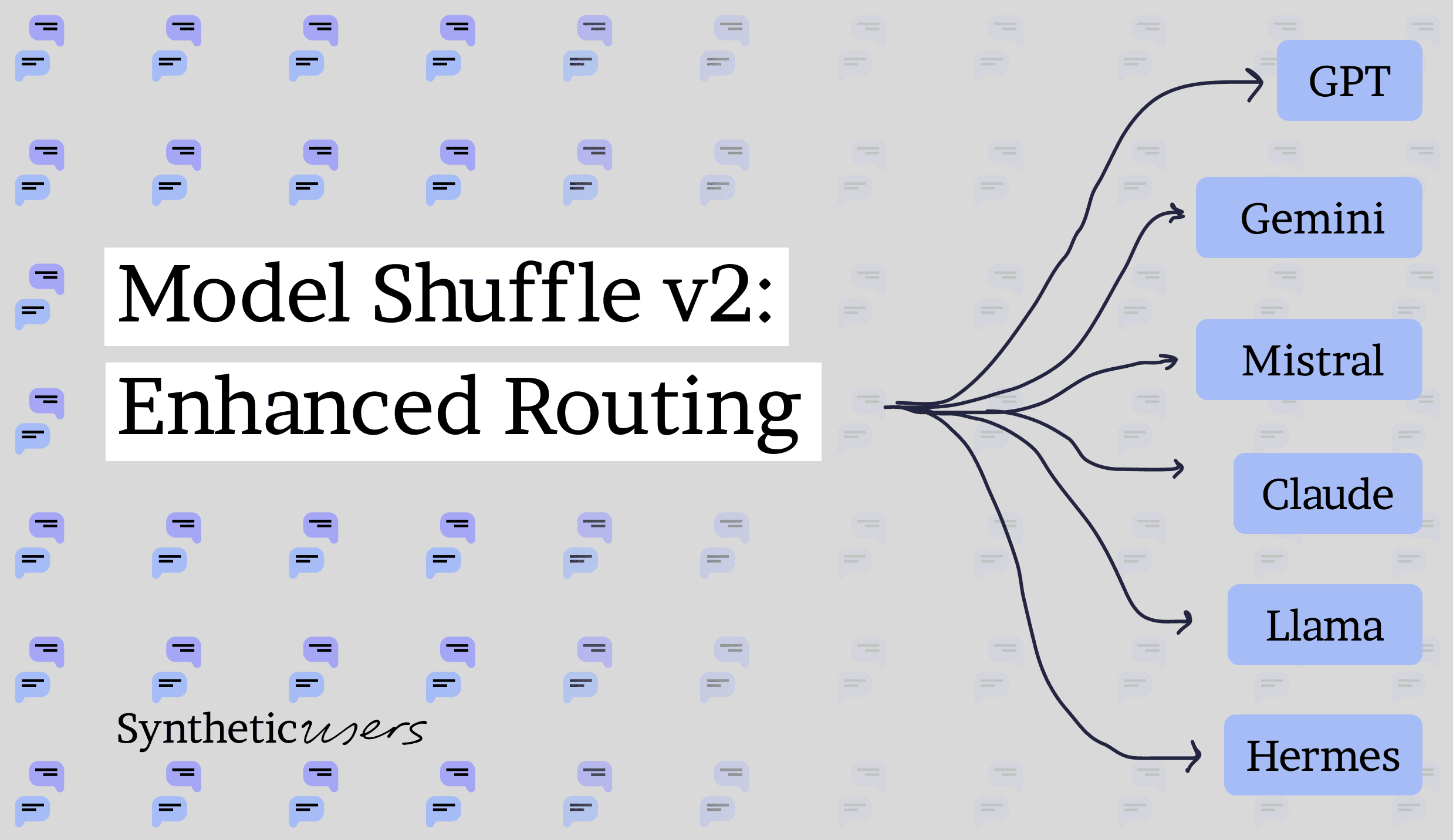

Shuffle v2 is a feature that intelligently shuffles between multiple large language models via a routing agent to produce more realistic, diverse Synthetic Users with better organic parity.

(Just because we use — frequently, does not mean this was written by a machine ;-)

At Synthetic Users, our core mission is to create AI-driven agents — Synthetic Users — that emulate real human behavior with remarkable fidelity (we call this SOP or Synthetic Organic Parity). In this sense we are diverging from those companies focused on Super Intelligence, simply because most of your customers or users aren’t going to be super intelligent. What we’re interested in is SOP and as such we’ve had to develop a new architecture to leverage LLMs in different ways.

Each new model brings its own set of capabilities, biases, and linguistic signatures. As we strive for SOP — the point at which Synthetic Users act, react, and communicate in ways indistinguishable from real humans — our research has shown that relying on any single LLM imposes inherent limitations on realism.

That’s why we’re excited to announce Shuffle v2, a step forward in our approach to synthesizing behavior by intelligently shuffling between different large language models. This update seeks to deliver more realistic, varied, and contextually accurate Synthetic Users by leveraging the strengths of multiple LLMs simultaneously.

Why Shuffle?

Different language models are trained on different datasets, use distinct architectures, and adopt divergent training objectives. Consequently, each LLM exhibits unique biases and excels at mimicking certain behavioral niches better than others. By rotating among multiple models (such as GPT, Gemini, Mistral, Claude, Llama, and Hermes), we harness the diversity of these models’ training histories.

Heterogeneous Biases, Balanced Output

All machine learning models have internalized biases in their weights based on the data they were trained on. By leveraging several models in tandem, we are effectively combining different “perspectives.” This reduces overfitting to any one model’s bias and results in Synthetic Users whose behaviors are more robust and closely aligned with the multifaceted nature of real human populations.

Parallel to Ensemble Methods

In broader machine learning research, combining multiple models to improve performance is a well-known technique—often referred to as ensemble learning. As noted by Dietterich (2000) in his paper “Ensemble Methods in Machine Learning,” ensemble approaches improve generalization and reduce variance. Shuffle v2 takes this principle a step further by intelligently selecting or routing which models participate in generating specific aspects of Synthetic User behavior.

How Shuffle v2 Works

Shuffle v2 uses a lightweight routing agent that “learns” how to delegate tasks to the most suitable model, or combination of models, based on the target audience or behavioral pattern we aim to replicate.

Behavioral Goal Definition

We start by defining the target behavioral profile of the Synthetic User population. Are we trying to recreate tech-savvy early adopters? Or older demographics with different linguistic nuances? By capturing these requirements, Shuffle v2 can determine which models (or ensembles of models) are likely to yield the best results.

Contextual Filtering

Each LLM is evaluated for its strengths in a specific demographic or psychographic niche. For instance, Model A might excel in short, factual answers, while Model B produces more conversational, empathetic responses. The routing agent maintains a knowledge base of such performance attributes.
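
To make the idea of a performance knowledge base concrete, here is a minimal sketch. It assumes each model is described by a handful of attribute scores between 0 and 1 and that a target profile is a set of attribute weights. The attribute names, the scores, and the weighted-average scoring rule are all hypothetical illustrations, not the actual knowledge base used by the routing agent.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ModelProfile:
    """One knowledge-base entry: a model and its (hypothetical) strengths."""
    name: str
    strengths: Dict[str, float]  # attribute -> score in [0, 1], illustrative values


# Hypothetical entries mirroring the "Model A" / "Model B" example in the text.
KNOWLEDGE_BASE: List[ModelProfile] = [
    ModelProfile("model_a", {"concise": 0.9, "empathetic": 0.3}),
    ModelProfile("model_b", {"concise": 0.4, "empathetic": 0.8}),
]


def fit_score(profile: ModelProfile, target: Dict[str, float]) -> float:
    """Weighted average of the model's strengths over the attributes the target weights."""
    total_weight = sum(target.values())
    if total_weight == 0:
        return 0.0
    weighted = sum(w * profile.strengths.get(attr, 0.0) for attr, w in target.items())
    return weighted / total_weight


def rank_models(target: Dict[str, float]) -> List[ModelProfile]:
    """Return knowledge-base entries ordered from best to worst fit for the target."""
    return sorted(KNOWLEDGE_BASE, key=lambda p: fit_score(p, target), reverse=True)
```

Calling `rank_models({"empathetic": 1.0})` puts `model_b` first, while a target that only weights `concise` puts `model_a` first.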

Adaptive Model Selection

Using a scoring or ranking algorithm influenced by Reinforcement Learning—see Sutton & Barto (2018) “Reinforcement Learning: An Introduction (2nd ed.)”—the routing agent routes each request (or each piece of a longer synthetic conversation) to the model(s) that best fit the criteria for that user or conversation. Over time, this agent adapts to changing data distributions, improving routing accuracy.
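
One simple way to picture a router of this kind is an epsilon-greedy multi-armed bandit, a standard technique from the early chapters of Sutton & Barto. The sketch below is an assumption made for illustration only: the class name, the reward signal, and the choice of epsilon-greedy are ours, and the post does not say which algorithm the routing agent actually uses.

```python
import random
from typing import Dict, List, Optional


class EpsilonGreedyRouter:
    """Illustrative router: mostly pick the best-scoring model, sometimes explore."""

    def __init__(self, models: List[str], epsilon: float = 0.1,
                 seed: Optional[int] = None) -> None:
        self.models = list(models)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts: Dict[str, int] = {m: 0 for m in self.models}
        self.values: Dict[str, float] = {m: 0.0 for m in self.models}

    def select(self) -> str:
        """With probability epsilon explore at random, otherwise exploit the best estimate."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.models)
        return max(self.models, key=lambda m: self.values[m])

    def update(self, model: str, reward: float) -> None:
        """Incrementally update the running mean reward for the chosen model."""
        self.counts[model] += 1
        n = self.counts[model]
        self.values[model] += (reward - self.values[model]) / n


# Hypothetical usage: the reward stands in for any quality signal in [0, 1].
router = EpsilonGreedyRouter(["model_a", "model_b"], epsilon=0.1, seed=0)
router.update("model_a", 0.2)
router.update("model_b", 0.9)
chosen = router.select()
```

Because the value estimates are running means, a model whose rewards drift over time gradually loses or gains priority, which is one way a router could adapt to changing data distributions.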

Synthesis & Validation

The selected models generate candidate responses. These outputs are then merged or curated (in certain scenarios) to avoid contradictory or repetitive statements. A final validation step ensures that each Synthetic User’s overall behavior remains coherent.
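
As a rough illustration of the curation step, the sketch below drops candidate responses that are near-duplicates of ones already kept. It only addresses repetition; detecting contradictions would need something more capable than string similarity. The function name and the 0.85 similarity threshold are hypothetical choices, not details from Shuffle v2.

```python
from difflib import SequenceMatcher
from typing import List, Tuple


def merge_candidates(candidates: List[str], threshold: float = 0.85) -> List[str]:
    """Keep candidates in order, skipping any that closely repeat an earlier one."""
    kept: List[Tuple[str, str]] = []  # (original text, normalized text)
    for text in candidates:
        # Normalize case and whitespace so trivial variations count as repeats.
        normalized = " ".join(text.lower().split())
        is_repeat = any(
            SequenceMatcher(None, normalized, kept_norm).ratio() >= threshold
            for _, kept_norm in kept
        )
        if not is_repeat:
            kept.append((text, normalized))
    return [original for original, _ in kept]
```

Given `["I like the app.", "I like  the App.", "Pricing is confusing."]`, the second entry normalizes to the same string as the first and is dropped, leaving two statements.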

Feedback Loop

After each platform deployment (every month), we run SOP tests to assess how closely the Synthetic Users match the organic user dataset. The results of these tests are fed back into the routing agent, helping it refine future decisions.
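
This post does not define how an SOP test is scored, so the following is purely an illustrative assumption. One way to compare synthetic and organic behavior is to label responses with some category (for example a sentiment label) and measure how far apart the two label distributions are. The sketch uses one minus the total variation distance, so 1.0 means identical distributions and 0.0 means no overlap. The function names and the choice of metric are hypothetical.

```python
from collections import Counter
from typing import Dict, Hashable, Sequence


def distribution(labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Turn a list of category labels into relative frequencies."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def parity_score(synthetic: Sequence[Hashable], organic: Sequence[Hashable]) -> float:
    """Return 1 - total variation distance between the two label distributions."""
    p = distribution(synthetic)
    q = distribution(organic)
    categories = set(p) | set(q)
    tv_distance = 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in categories)
    return 1.0 - tv_distance
```

A score like this could serve as the feedback signal for a router: models whose output raises the score on a given segment would be favored in later routing decisions.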

Scientific Underpinnings

Mixture of Experts

Shuffle v2 builds on mixture-of-experts approaches, in which multiple specialized models (“experts”) tackle sub-problems and a “gating network” decides which expert to use. For example, Google’s Switch Transformer, described by Fedus, Zoph, & Shazeer (2021) in “Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity,” demonstrates how a gating mechanism can dynamically route each input token to one of many expert sub-networks inside a single model.
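
The gating idea can be sketched in a few lines. In the Switch Transformer, a router produces one logit per expert, applies a softmax, and sends the input to the single highest-probability expert (top-1 routing), scaling that expert's output by the gate probability. The version below is a simplified, framework-free illustration with made-up logits. Shuffle v2 routes between whole LLMs rather than sub-networks, so this is an analogy and not a description of its internals.

```python
import math
from typing import List, Tuple


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over a list of gate logits."""
    highest = max(logits)
    exps = [math.exp(x - highest) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top1_route(gate_logits: List[float]) -> Tuple[int, float]:
    """Return the index of the chosen expert and its gate probability."""
    probs = softmax(gate_logits)
    expert = max(range(len(probs)), key=probs.__getitem__)
    return expert, probs[expert]


# Hypothetical gate logits for three experts.
expert_index, gate_prob = top1_route([0.1, 2.0, -1.0])
```

Here the second expert (index 1) is selected because it has the largest logit.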

Robustness via Ensemble Diversity

Kuncheva & Whitaker (2003), in “Measures of diversity in classifier ensembles and their relationship with the ensemble accuracy,” study how diversity among ensemble members relates to ensemble accuracy; diversity is widely regarded as a key ingredient of effective ensemble systems. By shuffling among models, we increase the diversity of possible outputs, thus stabilizing the behavioral distribution of our Synthetic Users.
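
One of the pairwise measures covered by Kuncheva & Whitaker is the disagreement measure: the fraction of samples on which exactly one of two classifiers is correct. The sketch below computes it from “oracle” outputs (1 if a model was correct on a sample, 0 otherwise) and averages it over all pairs. The measure is defined for classifiers, so applying it to generative models assumes some way of marking each output as correct or not. The sample data is invented.

```python
from itertools import combinations
from typing import List, Sequence


def disagreement(correct_a: Sequence[int], correct_b: Sequence[int]) -> float:
    """Fraction of samples where exactly one of the two models is correct."""
    if len(correct_a) != len(correct_b) or len(correct_a) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    differing = sum(a != b for a, b in zip(correct_a, correct_b))
    return differing / len(correct_a)


def mean_pairwise_disagreement(oracle_outputs: List[Sequence[int]]) -> float:
    """Average the disagreement measure over every pair of models."""
    pairs = list(combinations(oracle_outputs, 2))
    if not pairs:
        raise ValueError("need at least two models")
    return sum(disagreement(a, b) for a, b in pairs) / len(pairs)


# Invented oracle outputs for three models on four samples.
outputs = [[1, 1, 0, 0], [1, 0, 1, 0], [1, 1, 0, 0]]
diversity = mean_pairwise_disagreement(outputs)
```

Higher values mean the models tend to err on different samples, which is the kind of diversity an ensemble can exploit.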

Ensemble-Based Decision Making

He, Zhou, Zhang, & Yang, in “An ensemble learning framework based on group decision making” (IEEE), provide evidence that ensemble-based strategies can improve decision reliability. Shuffle v2 adapts these findings to the generation of more varied and realistic Synthetic User behaviors.

Looking Ahead

Shuffle v2 is a significant leap toward bridging the gap between synthetic and organic user behavior. In the near future, we plan to:

Expand the Model Pool: Incorporate more specialized models (e.g., domain-specific LLMs for finance, healthcare, etc.) to further refine Synthetic User authenticity.

Enhance Real-Time Adaptability: Introduce automated retraining pipelines for the routing agent to handle shifts in linguistic trends or user behaviors.

Integrate Deeper Behavioral Markers: Beyond just language, we aim to include non-textual cues (reaction times, sentiment patterns, etc.) so Synthetic Users can more faithfully reflect real-world human variability.

By enabling Shuffle v2, organizations can model user behavior with greater nuance, gain deeper insights into diverse audience segments, and develop more reliable prototypes for user interactions. We’re excited to see how Shuffle v2 transforms your experience with Synthetic Users—and paves the way for ever more authentic AI-driven emulations of human behavior.

References & Further Reading

Dietterich, T. G. (2000). Ensemble Methods in Machine Learning. In International Workshop on Multiple Classifier Systems (pp. 1–15).

Fedus, W., Zoph, B., & Shazeer, N. (2021). Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity. arXiv preprint arXiv:2101.03961.

Kuncheva, L. I., & Whitaker, C. J. (2003). Measures of diversity in classifier ensembles and their relationship with the ensemble accuracy. Machine Learning, 51(2), 181–207.

Sutton, R. S., & Barto, A. G. (2018). Reinforcement Learning: An Introduction (2nd ed.). MIT Press.

He, J., Zhou, X., Zhang, R., & Yang, C. An ensemble learning framework based on group decision making. Publisher: IEEE.