Gartner says we lead. That's kind of them.

Gartner’s latest report on AI-powered synthetic user research cites Synthetic Users as a leader.

When Gartner names your company as a leader in a new category, you stop, smile and ask the team if they’ve had enough coffee today, because our pace is apparently showing.

Gartner’s report is clear: the next wave of user insight will come from our ability to replicate 'humanness' and all its human preferences, not endless scheduling calls.

What Gartner noticed

Gartner highlights three observations that made us nod (and maybe high‑five):

Speed without sacrifice. Traditional research is a bottleneck; AI agents can run ten interviews, score them and surface patterns in minutes, not months.

85% behavioural fidelity. Stanford HAI demonstrated that interview‑based agents reproduce real decision‑making with 85% accuracy, good enough to move product discovery forward with confidence. Our own parity is higher, but 85% is a good baseline.

Dollars back to the roadmap. A study that costs organic researchers thousands of dollars costs cents when run with Synthetic Users. Multiply that across quarterly sprints and the savings are obvious.

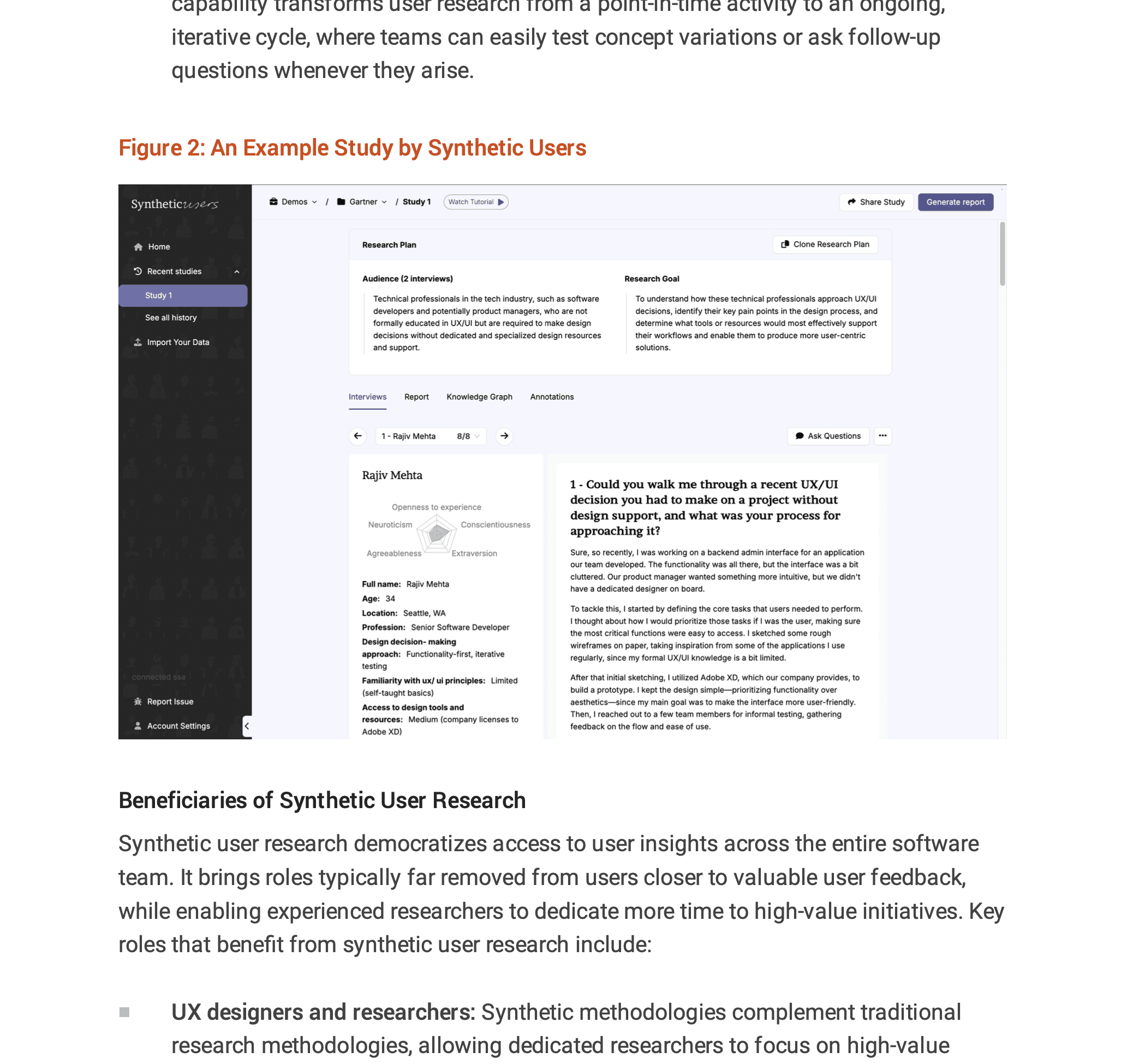

On page 7, Figure 2 even screenshots a Synthetic Users study to illustrate how instant, iterative dialogue works at scale. Nice choice, Gartner.

How we got here

Synthetic Users was never about replacing researchers; it was about reclaiming their time. Since launch we’ve:

Codified qualitative depth. First, our reptilian brain approach injects every Synthetic User with its own unique set of behavioural drivers. Then our knowledge‑graph architecture stores rich interview transcripts as agent memory, matching Gartner’s recommended best practice for accuracy.

Baked in skepticism. Adopt a skeptical yet curious mindset. With the Synthetic / Organic parity we offer, you can start making some decisions as soon as you reach first insight, which happens very quickly.

Democratised access. Engineers, PMs and CLevel… can all get insight instantly.

What this means for enterprises

Accelerated decision‑making across divisions. Marketing, CX, product and ops can all validate assumptions instantly thanks to always‑available Synthetic Users.

Continuous discovery becomes real. Insight streams stay open 24/7, so strategies evolve with live data—not last quarter’s anecdote.

Inclusive perspective stays central. The same drop‑down breadth of Synthetic Users ensures every business unit hears voices beyond the usual core markets.

More shots on goal. Rapid feedback loops let teams iterate campaigns, journeys and features long before budget lock‑in.

Of course, synthetic research isn’t a silver bullet (not yet), and we agree with Gartner’s caution on over‑reliance. Waymos still have steering wheels; pretty soon they won’t.

Science Corner data & gratitude

Before we sign off, a note on context: this piece lives in our Science Corner, where we dissect claims with numbers, not adjectives. So here are the metrics that undergird today’s highlights (source: internal usage telemetry, Jan–May 2025):

Over 30,000 synthetic interview sessions run by customers.

11 distinct industries served.

Median insight turnaround: 6 min 12 sec from research plan to report.

Average cost per study: US$11 vs. ≈ US$200 for comparable organic panels.
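To make the cost gap concrete, here is a minimal back-of-the-envelope sketch in Python. It uses only the two per-study averages quoted above. The workload of 10 studies per quarter is a purely hypothetical assumption for illustration, not a figure from our telemetry, and the function name `savings` is ours.

```python
# Per-study averages quoted in this post (internal usage telemetry, Jan to May 2025).
SYNTHETIC_COST_PER_STUDY = 11.0   # US$
ORGANIC_COST_PER_STUDY = 200.0    # US$, approximate


def savings(studies: int) -> dict:
    """Compare total spend for a given number of studies."""
    synthetic_total = studies * SYNTHETIC_COST_PER_STUDY
    organic_total = studies * ORGANIC_COST_PER_STUDY
    saved = organic_total - synthetic_total
    return {
        "synthetic_total": synthetic_total,
        "organic_total": organic_total,
        "saved": saved,
        "saved_pct": round(100 * saved / organic_total, 1),
    }


# Hypothetical workload (an assumption): 10 studies per quarter for 4 quarters.
result = savings(studies=10 * 4)
print(result)
```

Swap in your own study count to see how the difference scales. Because both totals grow linearly with the number of studies, the percentage saved stays the same whatever the workload.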

To every team who logged issues, shared transcripts and trusted synthetic voices: thank you. Your curiosity fuels the research that fills this corner of the blog.

And here's Gartner's study from June 2025 (behind a paywall).
