
Building Synthetic Users with "Guts First": Why Logic Isn't Enough

To build synthetic users that truly act like people, we must stop chasing perfect logic and start engineering the "gut" instincts and emotional biases that drive real human decisions.


At Synthetic Users, our job is simple to say and hard to do: replicate human behaviour well enough that teams can run user research faster, cheaper, and earlier.

We do that today with Large Language Models (LLMs), retrieval systems, and careful prompting. This gets you surprisingly far. But there’s a deeper scientific reason why we believe the field can go further, and why the next leap isn’t “more reasoning” but better guts.

This post ties together three threads:

Damásio’s neuroscience: Why emotion is the engine of choice, not a distraction from it.

The "System 2" Trap: Why the AI industry is building "smart" models when researchers need "human" models.

The Solution: How synthetic users can be engineered to have "gut feelings" so they don't just act logical but act real.

1. Damásio: Emotions are programs, feelings are the music

To understand why users behave the way they do, we look to neuroscientist Antonio Damásio (a personal hero of ours, and not because he is Portuguese). He makes a critical distinction that most people miss:

Emotion is a largely automatic action program. It is the body preparing to act (fight, flee, freeze, approach). These changes unfold in your body and nervous system before you are even aware of them.

Feeling is the conscious experience of those changes. It is the mental "music" playing in your head after the orchestra (your body) has already started.

The "Somatic Marker" (The Gut Check)

Damásio’s Somatic Marker Hypothesis argues that our bodies "tag" situations with value. When you face a choice, these markers fire as fast "gut signals" that bias your decision before you start logically analyzing the pros and cons.

If you damage the part of the brain that handles these markers (the ventromedial prefrontal cortex, in Damásio's patients), you don't lose IQ. You lose much of your ability to make decisions. You can list the pros and cons of a washing machine for three hours, but you can't pick one.
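To make the somatic-marker idea concrete, here is a toy Python sketch. It assumes each option carries a single marker value in [-1, 1], and `Option`, `gut_filter`, `deliberate`, and `choose` are hypothetical names, not from any library. A fast pass vetoes options that "feel bad" before a slow pass ranks the survivors by utility. It does not model the indecision seen in patients; it only shows how a marker can shrink the option set and override a purely deliberate ranking.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Option:
    name: str
    utility: float  # what slow, deliberate analysis would score it
    marker: float   # assumed gut tag in [-1, 1]; negative means "feels bad"

def gut_filter(options: List[Option], veto_below: float = -0.5) -> List[Option]:
    """Fast pass: discard options whose somatic marker is strongly negative."""
    return [o for o in options if o.marker >= veto_below]

def deliberate(options: List[Option]) -> Optional[Option]:
    """Slow pass: pick the highest-utility option among the survivors."""
    return max(options, key=lambda o: o.utility) if options else None

def choose(options: List[Option], markers_enabled: bool = True):
    """Gut first, reasoning second; returns the choice and how many options deliberation saw."""
    candidates = gut_filter(options) if markers_enabled else options
    return deliberate(candidates), len(candidates)

washing_machines = [
    Option("Brand A", utility=0.82, marker=-0.7),  # e.g. a friend's unit broke twice
    Option("Brand B", utility=0.78, marker=0.4),
    Option("Brand C", utility=0.60, marker=0.1),
]

best, seen = choose(washing_machines)
print(best.name, seen)  # Brand B 2
best, seen = choose(washing_machines, markers_enabled=False)
print(best.name, seen)  # Brand A 3
```

With markers on, Brand A is vetoed despite having the highest utility, and deliberation picks Brand B from two candidates. With markers off, deliberation sees all three and picks Brand A.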

Why this matters for UX Research and Synthetic Users:

Most products don't fail because users can't logically explain features. They fail because users:

Say they want X, but impulsively choose Y.

Bounce instantly because the UI "felt off."

Over-index on one bad memory (bias).

Buy a product because it matches their identity, not because of its utility.

These are Somatic Marker behaviours. They aren't rational calculus; they are emotional biases. If we want Synthetic Users to behave like your real customers, we can't ignore this layer.

2. The Trap: Why "Smarter" AI isn't always "Better" AI

Daniel Kahneman popularized a framework that is essential here, and Andrej Karpathy has since applied it to LLMs:

System 1: Fast, automatic, intuitive, emotional.

System 2: Slow, deliberate, calculating, logical.

The Industry Sprint to System 2

Right now, the AI industry is obsessed with making models smarter (System 2). They are building methods like "Chain-of-Thought" (forcing the AI to think step-by-step) to solve math problems and write code.

The Synthetic User Problem

This is great for a coding assistant, but it can be fatal for a synthetic user.

If you simply scale up System 2 reasoning, you get a hyper-rational agent.

Imagine a synthetic user evaluating a sketchy Fintech app:

A "System 2" AI might say:

"The interest rate is 2% higher than the market average, and the onboarding flow is efficient. I will sign up."

A Real Human (System 1) might say:

"The logo looks cheap and they asked for my SSN too fast. I don't trust it. I'm out."

The real human reaction happens in under two seconds. It’s a gut check. If synthetic users are too logical, they will accept products that real humans would reject. We don't just need smart models; we need models that can simulate human irrationality.
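The contrast can be sketched as two evaluators reading the same hypothetical observations about the fintech signup screen. The feature names, weights, and cue list are illustrative assumptions, not a validated model: the deliberate evaluator weighs only the interest rate and onboarding length, while the gut evaluator bails out on the first distrust cue.

```python
# Hypothetical observations about one fintech signup screen.
app = {
    "rate_vs_market": 0.02,           # 2% above the market average
    "onboarding_steps": 3,            # short, efficient flow
    "logo_looks_cheap": True,
    "asks_for_ssn_immediately": True,
}

# Illustrative cues a wary human reacts to before doing any arithmetic.
DISTRUST_CUES = ("logo_looks_cheap", "asks_for_ssn_immediately")

def system2_verdict(app: dict) -> str:
    """Deliberate: score only the 'rational' features."""
    score = 100 * app["rate_vs_market"] - 0.1 * app["onboarding_steps"]
    return "sign up" if score > 0 else "leave"

def system1_verdict(app: dict) -> str:
    """Gut: any single distrust cue ends the session; otherwise defer to deliberation."""
    if any(app.get(cue) for cue in DISTRUST_CUES):
        return "leave"
    return system2_verdict(app)

print(system2_verdict(app))  # sign up
print(system1_verdict(app))  # leave
```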

3. How to give AI a human System 1

The goal isn't to build a biological brain, but to build a system that replicates human preference patterns. To do that, the AI architecture needs "guts."

Here are the practical levers that could be used to achieve this:

3.1 Fine-tuning for "Snap Judgments"

Humans don't always deliberate. We often choose by instinct. To mimic this, models can be trained on data that reflects rapid categorization and "first answer that comes to mind" scenarios. This nudges the model away from writing a dissertation and toward giving a reaction.
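One way to assemble such data is sketched below. It writes prompt/reaction pairs in a chat-style `messages` JSONL layout, which resembles what several hosted fine-tuning services accept (check your provider's documentation for the exact schema). The examples, the persona string, and the 20-word cap are our assumptions; the point is that the assistant turns are short first reactions rather than reasoned essays.

```python
import json

MAX_WORDS = 20  # assumed cap: targets should read like reactions, not dissertations

# Hypothetical prompt / first-reaction pairs.
snap_examples = [
    ("A checkout page shows a 10-second countdown timer. Your reaction?",
     "Feels pushy. I'd probably close the tab."),
    ("A banking app asks for Face ID before showing your balance. Your reaction?",
     "Fine, that feels safe."),
]

def to_record(prompt: str, reaction: str,
              persona: str = "a busy 34-year-old shopping on their phone") -> dict:
    """Build one chat-style training record, rejecting long-winded targets."""
    if len(reaction.split()) > MAX_WORDS:
        raise ValueError(f"reaction too long for a snap judgment: {reaction!r}")
    return {"messages": [
        {"role": "system", "content": f"You are {persona}. Reply with your first gut reaction."},
        {"role": "user", "content": prompt},
        {"role": "assistant", "content": reaction},
    ]}

# One JSON object per line, ready to write to a .jsonl file.
jsonl = "\n".join(json.dumps(to_record(p, r)) for p, r in snap_examples)
print(jsonl)
```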

3.2 Bounded Rationality (Simulating Laziness)

The human brain is an energy-miser. We don't solve a logic puzzle every time we buy toothpaste.

This can be mimicked in AI by limiting the "compute" budget for certain decisions. By forcing the model to answer quickly, without deliberating or revising its answer, you get outputs that rely on heuristics (mental shortcuts), just like a tired user shopping on their phone at 8 PM.
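The text describes capping the model's compute. A closely related decision-level model is Herbert Simon's satisficing rule, sketched here as a swapped-in illustration rather than a model-internal mechanism. The hypothetical `satisfice` function inspects options in order, stops at the first one that clears an aspiration level, and gives up when its `budget` (standing in for the compute or token budget) runs out.

```python
def satisfice(options, evaluate, aspiration, budget):
    """Simon-style satisficing: return (choice, options_inspected).

    Stops at the first option scoring >= aspiration. If the budget runs out
    first, settles for the best option seen so far.
    """
    best = None
    for inspected, option in enumerate(options, start=1):
        if inspected > budget:
            break
        score = evaluate(option)
        if best is None or score > best[1]:
            best = (option, score)
        if score >= aspiration:
            return option, inspected
    return (best[0] if best else None), min(len(options), budget)

# Hypothetical toothpaste shelf: (name, how well it fits the shopper's needs).
shelf = [("Usual brand", 0.7), ("Whitening Pro", 0.9), ("Herbal", 0.5), ("Premium", 0.95)]
fit = lambda item: item[1]

tired = satisfice(shelf, fit, aspiration=0.6, budget=2)    # (("Usual brand", 0.7), 1)
fussy = satisfice(shelf, fit, aspiration=0.93, budget=4)   # (("Premium", 0.95), 4)
rushed = satisfice(shelf, fit, aspiration=0.93, budget=2)  # (("Whitening Pro", 0.9), 2)
```

A tired shopper (low aspiration) takes the usual brand after one look. A fussy shopper with a generous budget keeps searching until Premium clears the bar, while the same fussy shopper in a rush settles for the best of the first two.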

3.3 Affect and Drives (The "Mood" Layer)

Humans don't just think in words; we think in states (anxious, bored, excited).

A "guts-first" model would ideally possess a dynamic internal state based on established psychological dimensions (like the Circumplex Model of Affect):

Valence: Is this positive or negative?

Arousal: Am I calm or energized?
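A minimal sketch of reading a quadrant off these two axes, assuming both are scaled to [-1, 1]. The four labels are a coarse, illustrative simplification of Russell's circumplex, which places many emotion words around the circle, not a faithful reproduction of it.

```python
def affect_label(valence: float, arousal: float) -> str:
    """Map a point in the valence/arousal plane to a coarse quadrant label."""
    if not (-1.0 <= valence <= 1.0 and -1.0 <= arousal <= 1.0):
        raise ValueError("valence and arousal must lie in [-1, 1]")
    if arousal >= 0:
        # Energized half of the circle.
        return "excited" if valence >= 0 else "anxious"
    # Calm half of the circle.
    return "calm" if valence >= 0 else "bored"

print(affect_label(0.8, 0.7))    # excited
print(affect_label(-0.6, 0.8))   # anxious
print(affect_label(-0.4, -0.6))  # bored
```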

Example Scenario:

State A (High Trust/Excitement): The user is in a "gain-seeking" mode. They might overlook typos in a UI and click "Buy."

State B (Anxiety/High Arousal): The user is in "risk-avoidance" mode. The exact same UI typos now trigger a "scam" flag, and they leave.

By maintaining this "mood state" across a session, a synthetic user would behave consistently. They wouldn't just analyze a product; they would react to it based on their simulated emotional state.
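The session-level behaviour described above can be sketched with a small state object. Everything here is a hypothetical design: `Mood`, the exponential-smoothing `inertia` of 0.7, the stimulus values, and the rule that negative valence plus arousal above 0.3 means risk-avoidance are illustrative choices, not calibrated parameters.

```python
from dataclasses import dataclass

def _clamp(x: float) -> float:
    """Keep a value inside the [-1, 1] range used for both axes."""
    return max(-1.0, min(1.0, x))

@dataclass
class Mood:
    valence: float = 0.0  # -1 (negative) .. +1 (positive)
    arousal: float = 0.0  # -1 (calm) .. +1 (energized)

    def nudge(self, valence: float, arousal: float, inertia: float = 0.7) -> None:
        """Blend a new stimulus into the current state (exponential smoothing),
        so earlier screens keep colouring later ones."""
        self.valence = _clamp(inertia * self.valence + (1 - inertia) * valence)
        self.arousal = _clamp(inertia * self.arousal + (1 - inertia) * arousal)

def react_to_typos(mood: Mood) -> str:
    """Same stimulus (typos in the UI), different outcome depending on mood."""
    risk_avoidant = mood.valence < 0 and mood.arousal > 0.3
    return "leave: looks like a scam" if risk_avoidant else "overlook typos, click Buy"

# State A: high trust / excitement.
print(react_to_typos(Mood(valence=0.6, arousal=0.6)))  # overlook typos, click Buy

# State B builds up over a session: two bad experiences in a row.
session = Mood()
session.nudge(valence=-0.9, arousal=0.9)  # e.g. a surprise fee at checkout
session.nudge(valence=-0.8, arousal=0.8)  # e.g. the page times out
print(react_to_typos(session))            # leave: looks like a scam
```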

3.4 Persona + Memory

Gut instincts are shaped by history. To be believable, a synthetic user needs a persistent memory of "past experiences" to form biases. If a synthetic user "had a bad experience" with a subscription model in its backstory, it should instinctively hesitate when it sees a "Subscribe" button today.
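A minimal sketch of memory-driven hesitation, assuming each remembered experience carries a few tags and a felt valence. `Memory`, `Persona`, `gut_reaction`, the tag names, and the additive scoring rule are all hypothetical; a real system might instead retrieve relevant memories by embedding similarity rather than exact tag overlap.

```python
from dataclasses import dataclass, field
from typing import List, Set

@dataclass
class Memory:
    description: str
    tags: Set[str]
    valence: float  # how the experience felt, -1 .. +1

@dataclass
class Persona:
    name: str
    memories: List[Memory] = field(default_factory=list)

    def gut_reaction(self, ui_tags: Set[str]) -> float:
        """Sum the feelings of past experiences that share a tag with what is on screen.
        Negative means hesitation, positive means attraction."""
        return sum(m.valence for m in self.memories if m.tags & ui_tags)

# Hypothetical persona backstory.
maya = Persona("Maya", [
    Memory("Forgot to cancel a free trial and was billed for a year",
           {"subscription", "free_trial"}, -0.8),
    Memory("Enjoyed a meal kit bought one box at a time",
           {"one_off_purchase", "food"}, 0.5),
])

print(maya.gut_reaction({"subscription", "cta_button"}))  # -0.8: hesitates at "Subscribe"
print(maya.gut_reaction({"one_off_purchase"}))            # 0.5
```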

4. The "Guts First" Stack

If we take Damásio seriously, we can't just slap a persona on a chatbot. A truly effective synthetic user stack needs:

The Habit Engine (System 1): A fast, biased model that reacts to stimuli based on persona and "mood."

The Rationalizer (System 2): A slower layer that steps in only when the task is novel or difficult, or that justifies the gut decision after the fact.

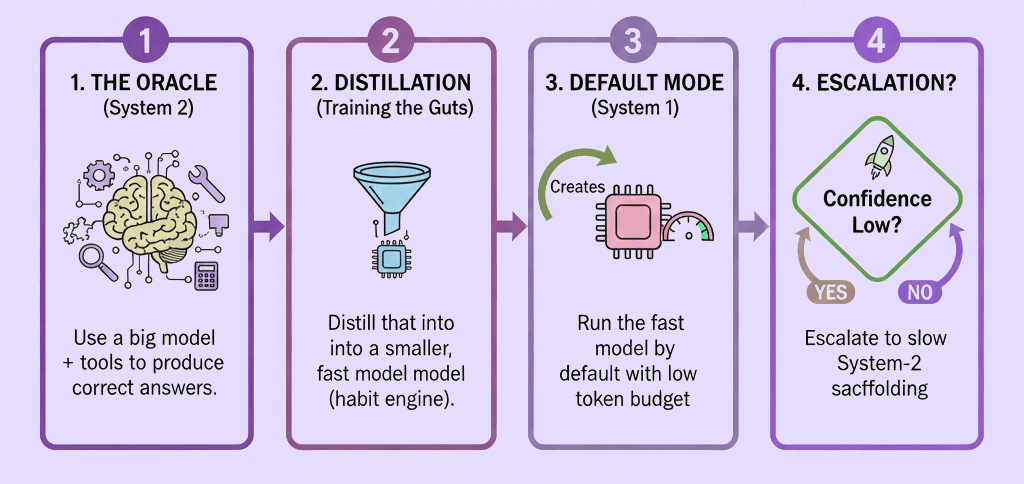

Here’s a practical end-game:

Use a big model plus tools to produce correct answers, rotating between models if you can.

Distill that into a smaller fast model (habit engine).

Run the fast model by default with a low token budget.

Escalate to slow System-2 scaffolding only when confidence is low.

This is a first pass at mirroring human cognition: Gut first, reasoning second.
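The routing logic of this end-game can be sketched in a few lines. `fast` and `slow` are hypothetical stand-ins for the distilled habit engine and the System-2 scaffolding, the token budgets and 0.6 threshold are arbitrary assumptions, and the hard part, getting a calibrated confidence out of the fast model, is not shown.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

@dataclass
class Answer:
    text: str
    confidence: float  # 0..1; assumed to be produced by the model somehow

# A model is anything that maps (prompt, max_tokens) to an Answer.
Model = Callable[[str, int], Answer]

def guts_first(prompt: str, fast: Model, slow: Model, threshold: float = 0.6,
               fast_budget: int = 32, slow_budget: int = 1024) -> Tuple[Answer, str]:
    """Answer with the cheap habit engine; escalate only when it is unsure."""
    gut = fast(prompt, fast_budget)
    if gut.confidence >= threshold:
        return gut, "system1"
    return slow(prompt, slow_budget), "system2"

# Toy stand-ins for the two models (hypothetical, for demonstration only).
def toy_fast(prompt: str, max_tokens: int) -> Answer:
    familiar = "checkout" in prompt.lower()
    return Answer("Looks fine, I'd buy.", 0.9 if familiar else 0.3)

def toy_slow(prompt: str, max_tokens: int) -> Answer:
    return Answer("Let me weigh this step by step...", 0.95)

print(guts_first("Review this checkout page", toy_fast, toy_slow)[1])   # system1
print(guts_first("Set up a self-directed IRA", toy_fast, toy_slow)[1])  # system2
```

Keeping the threshold as a parameter makes it easy to tune how often the synthetic user "stops and thinks" versus answering on instinct.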

A quick GPT summary for those who skip to the end

Damásio's Insight: Emotion isn't the enemy of decision-making; it's the foundation of it.

The Industry Gap: While the world rushes toward hyper-logical AI, user research requires human-like, biased, emotional AI.

The Path Forward: By engineering "somatic markers," affective states, and bounded rationality, the industry can move toward synthetic users that don't just think like your customers: they choose like them.

References & Further Reading

On Neuroscience & Emotion

Damásio, A. (1994). Descartes' Error: Emotion, Reason, and the Human Brain. (Foundational text on the Somatic Marker Hypothesis).

Damásio, A. (1999). The Feeling of What Happens: Body and Emotion in the Making of Consciousness.

Russell, J. A. (1980). "A Circumplex Model of Affect." Journal of Personality and Social Psychology. (The source for Valence/Arousal modeling).

On AI & System 1/2

Kahneman, D. (2011). Thinking, Fast and Slow. (The book that popularized the System 1 vs 2 framework).

Karpathy, A. Public talks on LLM psychology and the "OS" analogy. Karpathy.ai

Wei, J., et al. (2022). "Chain-of-Thought Prompting Elicits Reasoning in Large Language Models." NeurIPS. (The academic basis for "forcing" System 2 logic).

Simon, H. A. (1955). "A Behavioral Model of Rational Choice." The Quarterly Journal of Economics. (A foundational paper on "Bounded Rationality", essential for understanding user limitations).