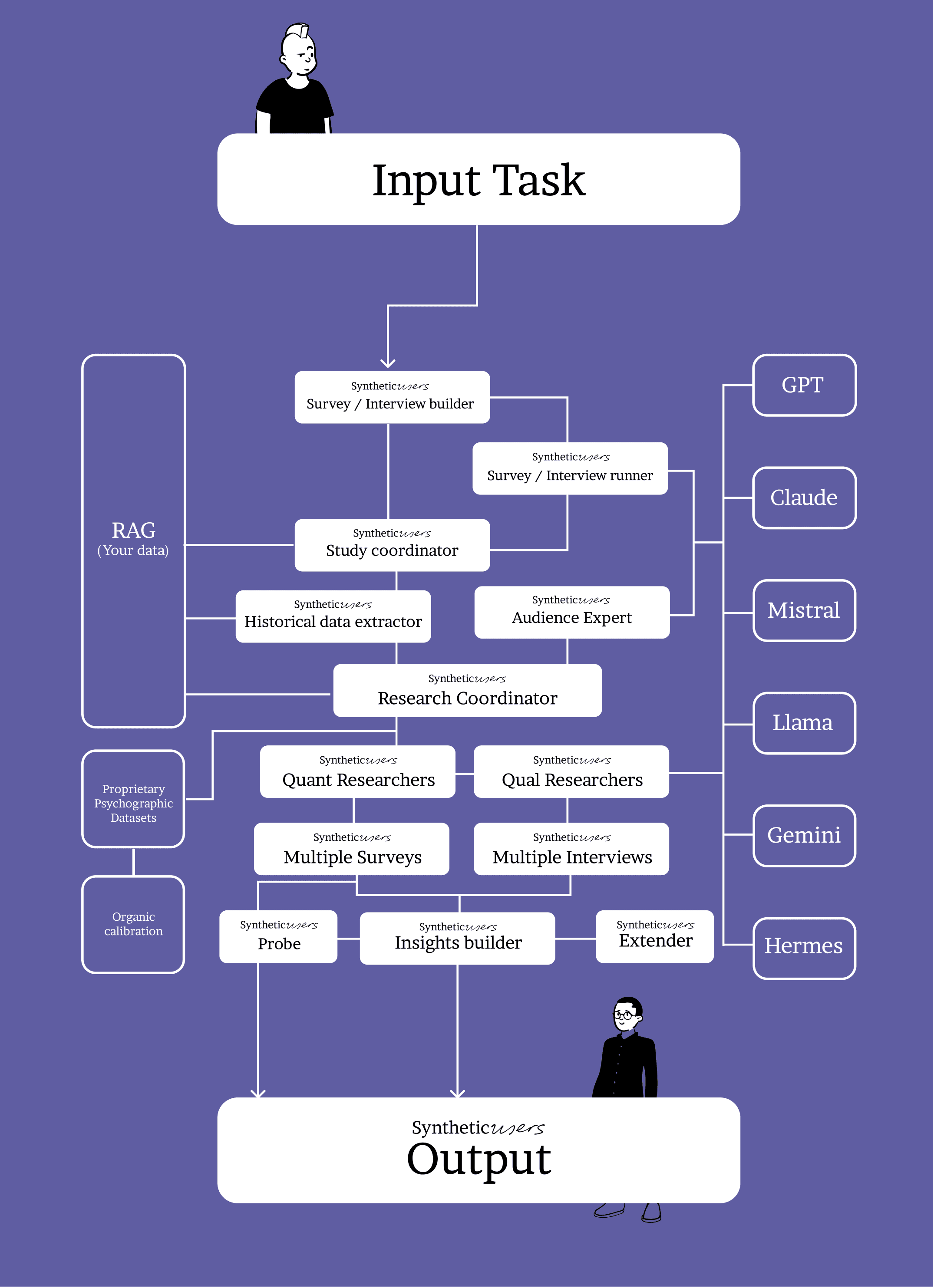

Synthetic Users system architecture (the simplified version).

Foundation models underpin Synthetic Users with advanced capabilities, enhanced by synthetic data and RAG layers for realism and business alignment, all within a collaborative multi-agent framework for richer interactions.

TL;DR

But why shouldn't I go directly to an LLM like GPT, Claude, or Gemini? Because going straight to a single model gives increasingly hyper-rational answers that don’t read like real (Organic) customers. People are smart, but they use shortcuts and are influenced by subconscious drivers. We fix that by:

routing across an ensemble of LLMs,

applying personality remapping (behavior → OCEAN) calibrated to real-world distributions,

grounding answers in your data via RAG, and

using specialized agents (Planner → Interviewer → Critic/Reviewer) with a feedback loop that tunes routing, prompts, and calibration over time.

For why this isn’t “digital twins,” see Synthetic Users vs Digital Twins: https://www.syntheticusers.com/science-posts/synthetic-users-vs-digital-twins

Why this architecture (in one screen)

Multi-agent = better interviews. Different agents collaborate and critique, lifting realism and depth without hand-holding.

Ensemble over a single model. Models have different strengths and biases; routing/aggregation reduces skew and increases reliability.

Grounded in your data. RAG adds your facts at answer-time, so outputs stay specific and current without retraining.

Reality-checked. We benchmark against organic interviews using Synthetic Organic Parity (SOP) and encourage customers to run their own comparisons.

Not “digital twins.” We model behavioral spaces (traits × context × knowledge), not 1:1 replicas. It scales and stays fresh.

The pipeline (brief, practical)

1) Router + LLM ensemble

We’re model-agnostic. A lightweight router selects—and sometimes sequences—multiple LLMs (and can aggregate outputs). This hedges the failure modes of any single model and improves realism.

What you control: the task (“evaluate onboarding flow”), audience hints, and constraints (jurisdiction, tone, risk).

What we adjust: model choice/order, temperature, aggregation, and guardrails.
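As a rough illustration of routing plus aggregation, the sketch below ranks models by how well their strengths overlap with the task, picks the top few, and aggregates answers by majority vote. The model names, strength tags, and the `ask` stub are assumptions made for this example; the production router is not described at this level of detail.

```python
from collections import Counter

# Hypothetical model registry: names and strength tags are illustrative only.
MODELS = {
    "model_a": {"clinical", "formal"},
    "model_b": {"casual", "consumer"},
    "model_c": {"formal", "legal"},
}

def route(task_tags, k=2):
    """Return the k models whose strength tags overlap most with the task."""
    ranked = sorted(MODELS, key=lambda m: (-len(MODELS[m] & task_tags), m))
    return ranked[:k]

def aggregate(answers):
    """Majority vote over short categorical answers."""
    return Counter(answers).most_common(1)[0][0]

def ask(model, question):
    """Stub standing in for a real LLM call (assumption)."""
    return f"{model}: answer to {question!r}"

selected = route({"clinical", "formal"})
responses = [ask(m, "How do you screen patients?") for m in selected]
```

Majority voting only suits short, categorical outputs. For free-text interview answers, aggregation would more plausibly mean selecting or merging responses, which this sketch does not attempt.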

2) Personality remapping (OCEAN-calibrated)

We don’t just generate a random OCEAN (Big Five: openness, conscientiousness, extraversion, agreeableness, neuroticism) profile. We remap behavior to personality and calibrate to real populations:

Behavior → trait signals. We ingest proprietary behavioral datasets (e.g., purchasing frequency/recency, content categories viewed, session patterns) and map them to trait evidence.

Population calibration. We fit the resulting OCEAN distributions to match the real world for each target market/cohort. If our synthetic distribution drifts, we re-weight and remap.

Cohort priors. Geography, industry, and segment priors shift the sampling so your “Synthetic Users” resemble the actual mix of personalities you’d meet in that audience.

Per-study sampling. For each study, we sample personas so the set reflects the calibrated distribution (not just plausible one-offs).

Ongoing checks. We track coverage, drift, and parity against organic interviews and adjust remapping as needed.

Short version: OCEAN is the language, but calibration is the guarantee that our personalities line up with the organic world.
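One simple way to picture population calibration and per-study sampling is importance re-weighting: each persona gets a weight equal to its bucket's target share divided by its observed share, and studies are sampled with those weights. The sketch below assumes a single trait bucketed into low/mid/high and made-up target shares. It illustrates the idea only and is not the calibration method used in production.

```python
import random
from collections import Counter

def calibration_weights(observed, target):
    """Per-bucket weight = target share / observed share."""
    total = sum(observed.values())
    return {b: target[b] / (observed[b] / total) for b in observed}

def sample_personas(personas, target, n, seed=0):
    """Resample personas so trait buckets follow the target distribution."""
    observed = Counter(p["bucket"] for p in personas)
    weights = calibration_weights(observed, target)
    rng = random.Random(seed)
    per_persona = [weights[p["bucket"]] for p in personas]
    return rng.choices(personas, weights=per_persona, k=n)

# Illustrative pool: 60% low, 30% mid, 10% high conscientiousness.
pool = (
    [{"id": i, "bucket": "low"} for i in range(60)]
    + [{"id": i, "bucket": "mid"} for i in range(60, 90)]
    + [{"id": i, "bucket": "high"} for i in range(90, 100)]
)
target = {"low": 0.2, "mid": 0.5, "high": 0.3}  # assumed population shares
weights = calibration_weights(Counter(p["bucket"] for p in pool), target)
study = sample_personas(pool, target, n=1000)
```

If the pool drifts (say, too many low-conscientiousness personas accumulate), the observed shares change and the weights adjust on the next call, which mirrors the "re-weight and remap" step described above.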

3) Your data via RAG (not fine-tuning)

At answer-time we retrieve facts from your interviews, surveys, CRM notes, and product docs, and ground responses in them. No retraining required; updates flow through immediately. (Fine-tuning, when used, is separate and not required for grounding.)
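A minimal sketch of answer-time grounding, assuming a tiny in-memory document list and bag-of-words cosine similarity in place of a real embedding index. The documents, function names, and prompt wording are hypothetical.

```python
import math
import re
from collections import Counter

def tokens(text):
    """Lowercased word counts for a piece of text."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, docs, k=2):
    """Return the k documents most similar to the query."""
    q = tokens(query)
    return sorted(docs, key=lambda d: cosine(q, tokens(d)), reverse=True)[:k]

def build_prompt(question, docs, k=2):
    """Prepend retrieved facts to the question before it reaches the model."""
    context = "\n".join(f"- {d}" for d in retrieve(question, docs, k))
    return f"Use only these facts:\n{context}\n\nQuestion: {question}"

DOCS = [
    "Onboarding requires email verification before the first login.",
    "Refunds are processed within 14 days of the request.",
    "The mobile app supports German and English.",
]
prompt = build_prompt("How long do refunds take?", DOCS, k=1)
```

Because retrieval runs per question, editing an entry in `DOCS` changes the very next prompt. No model weights are touched, which is the practical difference from fine-tuning.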

4) Agents that do the work

Planner — turns your research goal into an interview plan.

Interviewer — runs the script, asks natural follow-ups, stays on brief.

Critic/Reviewer — checks realism, gaps, contradictions; triggers re-asks.

Router — optimizes LLM selection/ordering across the session.

Agents coordinate and learn from outcomes rather than relying on one monolithic prompt.
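The Planner → Interviewer → Critic hand-off can be sketched as a loop in which the critic's verdict decides whether a question is re-asked. Every function here is a stub with hypothetical behavior (the interviewer deliberately gives a thin first answer so the re-ask path is exercised); real agents would call LLMs.

```python
def planner(goal):
    """Turn a research goal into an ordered list of questions (stubbed)."""
    return [
        f"What is your experience with {goal}?",
        f"What frustrates you about {goal}?",
    ]

def interviewer(question, attempt):
    """Stub for the LLM-backed interview turn; the first attempt is thin."""
    return "It depends." if attempt == 0 else f"Detailed answer to: {question}"

def critic(answer, min_words=4):
    """Accept an answer only if it is long enough to be useful."""
    return len(answer.split()) >= min_words

def run_interview(goal, max_reasks=2):
    """Ask each planned question, re-asking while the critic rejects the answer."""
    transcript = []
    for question in planner(goal):
        for attempt in range(max_reasks + 1):
            answer = interviewer(question, attempt)
            if critic(answer):
                break
        transcript.append(
            {"question": question, "answer": answer, "attempts": attempt + 1}
        )
    return transcript

transcript = run_interview("the onboarding flow")
```

The `attempts` count recorded per question is the kind of signal a feedback loop could later consume, for example to spot questions that routinely need re-asking.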

5) Feedback loop

We capture misses, contradictions, weak coverage, parity deltas, and calibration drift. That data updates routing, prompt templates, and personality remapping so interviews get sharper on your audience and edge cases.
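As one hedged illustration of how outcome data could tune routing, the sketch below nudges a model's routing weight toward its latest quality score with an exponential moving average and then renormalizes. The scores, learning rate, and model names are invented for the example; the text does not specify the actual update rule.

```python
def update_weights(weights, model, score, lr=0.2):
    """Move one model's weight toward its latest score (0..1), then renormalize."""
    updated = dict(weights)
    updated[model] = (1 - lr) * updated[model] + lr * score
    total = sum(updated.values())
    return {m: w / total for m, w in updated.items()}

weights = {"model_a": 0.5, "model_b": 0.5}

# Hypothetical critic scores from three interviews: (model used, quality score).
outcomes = [("model_a", 0.9), ("model_b", 0.2), ("model_a", 0.8)]
for model, score in outcomes:
    weights = update_weights(weights, model, score)
```

After these three outcomes the router would favor `model_a`, since its scores were consistently higher. The same pattern could apply to prompt templates or calibration parameters, each with its own score.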

Worked example (concrete)

Task: “Clinical interview with an oncologist in Berlin about trial enrollment UX.”

Router favors models strong on clinical context and formal tone.

Personality remapping samples a profile consistent with the real-world distribution for German hospital specialists (e.g., high conscientiousness, risk-aware, time-constrained), derived from our calibrated behavioral model.

RAG pulls your oncology guidelines, past interview notes, and relevant policy constraints.

Interviewer probes consent flow, eligibility filters, EMR hand-offs.

Critic flags missing questions on adverse-event reporting and multilingual support; re-asks.

Feedback updates routing and nudges remapping if we see systematic gaps for this cohort.

What this buys you

Speed: realistic interviews and a solid report in minutes.

Coverage: multiple personas/contexts fast, without enumerating “twins.”

Change-tracking: when policies/copy change, RAG picks it up without retraining.

Bias control: ensemble + critic reduce single-model skew.

Reality checks: SOP comparisons keep us honest; calibration keeps personas aligned to the organic world.

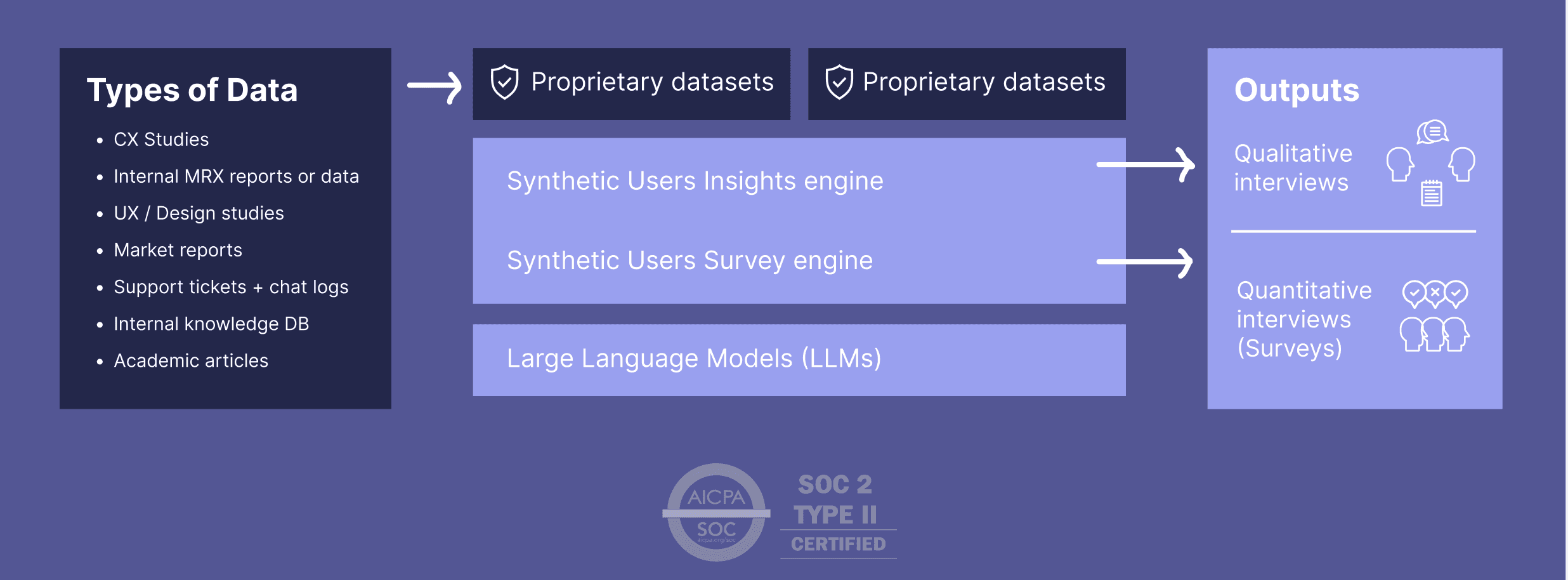

RAG

The RAG layer tailors Synthetic Users to a specific business by integrating its domain-specific knowledge bases. Generated content is therefore contextually relevant and aligned with business objectives, which makes Synthetic Users effective tools for that business's particular requirements.

Not digital twins — and why that matters

Digital twins try to clone individuals one-by-one. That breaks down with real audiences: combinatorial explosion, drift, privacy overhead, and brittleness outside observed data. We instead factorize (traits × context × knowledge), calibrate the trait distribution to the organic world, sample cohorts from that calibrated space, and compose behaviors that generalize and update instantly when any factor changes. For the deeper dive, read Synthetic Users vs Digital Twins:

https://www.syntheticusers.com/science-posts/synthetic-users-vs-digital-twins
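To make the factorization argument concrete, the sketch below composes personas from three small factor lists. The factor values are illustrative assumptions; the point is that storage grows with the sum of the factor sizes while the reachable personas grow with their product, and that updating one factor refreshes every persona built from it.

```python
from itertools import product

# Illustrative factor values; the real factor spaces are not specified in the text.
TRAITS = ["high conscientiousness", "high openness", "high neuroticism"]
CONTEXTS = ["rural grocery shopper", "hospital specialist"]
KNOWLEDGE = ["retail catalog v1", "clinical guidelines v1"]

def compose(trait, context, knowledge):
    """Build one persona from one value per factor."""
    return {"trait": trait, "context": context, "knowledge": knowledge}

# Factors stored: 3 + 2 + 2 = 7 entries. Personas reachable: 3 * 2 * 2 = 12.
stored = len(TRAITS) + len(CONTEXTS) + len(KNOWLEDGE)
personas = [compose(*combo) for combo in product(TRAITS, CONTEXTS, KNOWLEDGE)]

# Updating a single factor value changes every persona that uses it
# the next time personas are composed.
KNOWLEDGE[0] = "retail catalog v2"
refreshed = [compose(*combo) for combo in product(TRAITS, CONTEXTS, KNOWLEDGE)]
```

A one-by-one twin approach would need each of those 12 personas maintained separately, and the gap widens quickly as factors gain values.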

Extras

For more on bias, read How we deal with bias.

The quality we are known for.

This architecture underpins the generation of diverse and insightful Synthetic User interactions, supporting nuanced data analysis and decision-making processes.

These are the types of data ingested by large language models.
